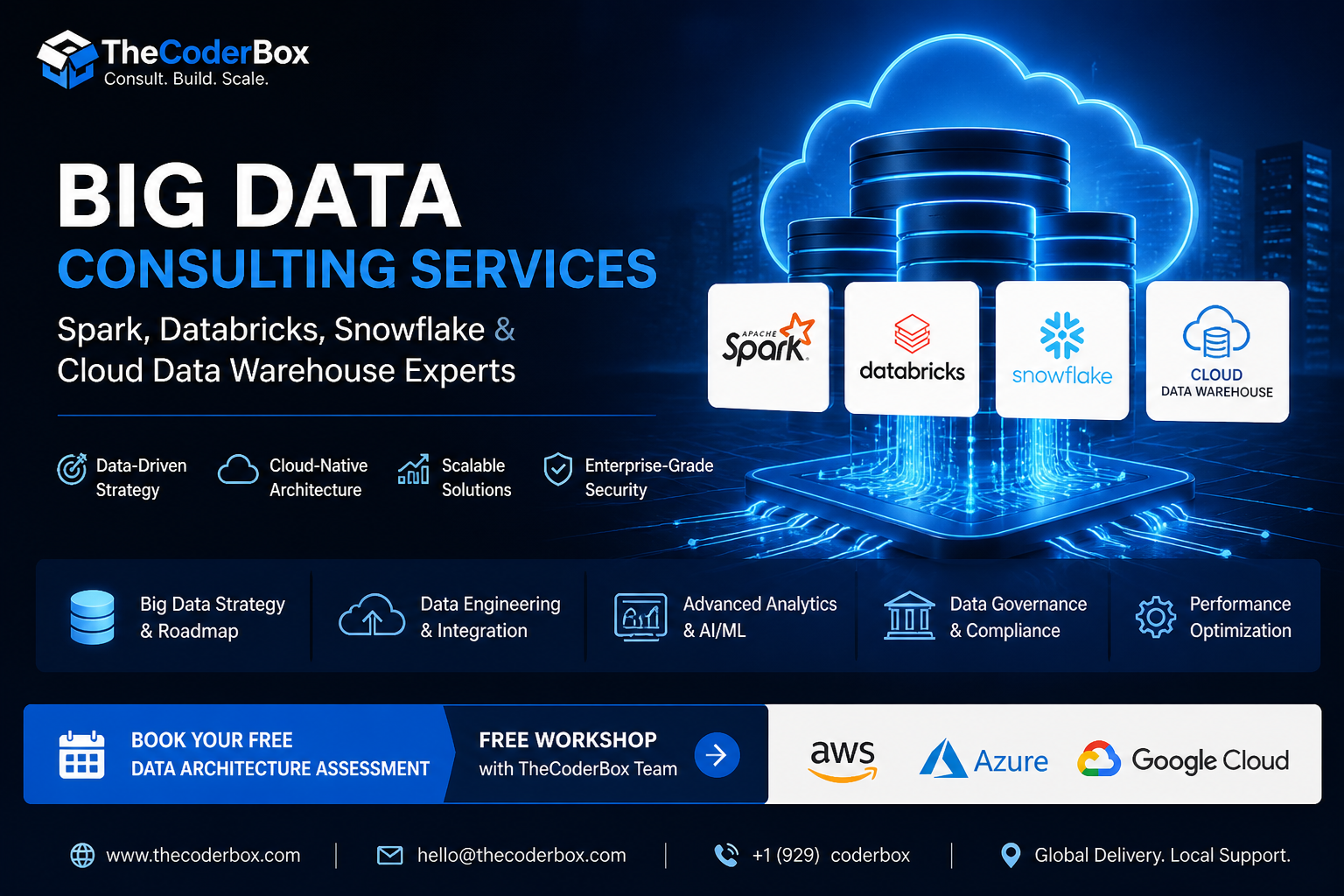

Your data is growing faster than your current tools can handle, and fragmented pipelines and slow dashboards cost your business every single day. Big data consulting services from TheCoderBox turn that chaos into governed, high-performance infrastructure. Our certified engineers build production-grade systems on Apache Spark, Databricks, and Snowflake, so your teams unlock insights faster and operate at enterprise scale.

We partner with organizations across financial services, healthcare, e-commerce, and media, so our data engineering consulting frameworks are proven across diverse regulatory and operational contexts. As a result, you receive a solution designed for your industry, not a generic template.

What Is Big Data Consulting and When Do You Need It?

Big data consulting services cover strategy, architecture design, and hands-on data engineering delivery. Consultants assess your infrastructure, identify gaps, and build scalable pipelines, aligning every technical decision with measurable business outcomes.

Signs Your Business Has Outgrown Basic Analytics Tools

Recognizing the warning signs early prevents costly data debt from accumulating:

- Slow reporting: Dashboards take hours to refresh, delaying executive decisions.

- Fragmented silos: Teams maintain separate spreadsheets with conflicting figures.

- No real-time view: You cannot observe customer behavior or fraud as it happens.

- Infrastructure failures: Databases crash during peak load, halting operations entirely.

- Compliance backlog: Regulatory reports take days to assemble manually instead of hours through automation.

The 5 Vs of Big Data: Volume, Velocity, Variety, Veracity, Value

Understanding the 5 Vs defines exactly what big data consulting services must solve for your organization:

- Volume: Petabytes of transactional and behavioral data exceeding legacy database limits.

- Velocity: Streaming events from IoT devices, APIs, and clickstreams arriving continuously, often milliseconds apart.

- Variety: Structured tables, JSON logs, images, and unstructured documents coexisting.

- Veracity: Ensuring data quality, deduplication, and consistency at every pipeline stage.

- Value: Transforming raw inputs into decisions that produce measurable revenue impact.
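Veracity is the easiest of the five to make concrete. Below is a minimal Python sketch of a quality gate at a single pipeline stage. It drops duplicate deliveries by event ID and quarantines records that fail basic checks. The `Event` fields and the two validation rules are hypothetical examples, not a prescribed schema.

```python
from dataclasses import dataclass
from typing import Iterable, List, Optional, Tuple


@dataclass(frozen=True)
class Event:
    # Hypothetical record shape, for illustration only.
    event_id: str
    customer_id: Optional[str]
    amount: float


def clean_events(events: Iterable[Event]) -> Tuple[List[Event], List[Event]]:
    """Deduplicate by event_id, then split records into (valid, rejected)."""
    seen = set()
    valid: List[Event] = []
    rejected: List[Event] = []
    for event in events:
        if event.event_id in seen:
            continue  # duplicate delivery: keep only the first copy
        seen.add(event.event_id)
        # Hypothetical rules: every event needs a customer and a non-negative amount.
        if event.customer_id is None or event.amount < 0:
            rejected.append(event)  # route to a quarantine table for review
        else:
            valid.append(event)
    return valid, rejected


valid, rejected = clean_events([
    Event("e1", "c1", 10.0),
    Event("e1", "c1", 10.0),  # duplicate
    Event("e2", None, 5.0),   # missing customer
    Event("e3", "c2", -1.0),  # negative amount
    Event("e4", "c3", 7.5),
])
```

Rejected records are kept rather than silently discarded, so data owners can review and fix them at the source.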

On-Premise vs. Cloud Data Architecture: Total Cost Comparison

Many enterprises still debate whether to maintain on-premise infrastructure or migrate to the cloud. For most analytics workloads, total cost of ownership favors cloud-native data platforms. On-premise deployments require upfront capital expenditure, hardware refresh cycles, and dedicated operations teams. Cloud platforms, in contrast, offer consumption-based pricing and elastic horizontal scaling.

Our cloud data warehouse migration services include detailed financial modeling before any migration begins, so you understand the projected break-even point and five-year cost upfront. The model accounts for egress fees, reserved instance discounts, and compute optimization opportunities.
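The break-even arithmetic behind such a model can be sketched in a few lines of Python. This is a simplified illustration under stated assumptions. The cost categories are hypothetical groupings, the reserved discount is applied to compute only, and every figure is a placeholder, not a benchmark or a client result.

```python
from dataclasses import dataclass
from typing import Optional, Tuple


@dataclass
class OnPremCosts:
    hardware_refresh: float  # upfront capex if you stay on-premise
    ops_monthly: float       # staff, power, licences, support contracts


@dataclass
class CloudCosts:
    migration_one_time: float
    compute_monthly: float
    storage_monthly: float
    egress_monthly: float
    reserved_discount: float = 0.0  # assumed fraction taken off compute, e.g. 0.25

    def monthly(self) -> float:
        compute = self.compute_monthly * (1 - self.reserved_discount)
        return compute + self.storage_monthly + self.egress_monthly


def cumulative_costs(onprem: OnPremCosts, cloud: CloudCosts, month: int) -> Tuple[float, float]:
    """Total spend after `month` months as (on-premise, cloud)."""
    return (
        onprem.hardware_refresh + onprem.ops_monthly * month,
        cloud.migration_one_time + cloud.monthly() * month,
    )


def break_even_month(onprem: OnPremCosts, cloud: CloudCosts, horizon_months: int = 60) -> Optional[int]:
    """First month in which cumulative cloud spend is no higher than on-premise, else None."""
    for month in range(1, horizon_months + 1):
        onprem_total, cloud_total = cumulative_costs(onprem, cloud, month)
        if cloud_total <= onprem_total:
            return month
    return None


# Illustrative placeholder figures, not benchmarks.
onprem = OnPremCosts(hardware_refresh=0, ops_monthly=55_000)
cloud = CloudCosts(migration_one_time=360_000, compute_monthly=40_000,
                   storage_monthly=5_000, egress_monthly=2_000, reserved_discount=0.25)
month = break_even_month(onprem, cloud)      # month 20 with these inputs
five_year = cumulative_costs(onprem, cloud, 60)
```

With these inputs, the one-time migration cost is recovered through a monthly saving of 18,000, giving break-even in month 20. A real model would also vary inputs over time (growth, price changes, a scheduled hardware refresh).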

AWS, Azure, and Google Cloud for Big Data: Platform Comparison

Platform selection significantly affects both cost and long-term agility. AWS excels in ecosystem breadth, with natively integrated services such as Redshift, Glue, Kinesis, and EMR. Azure is often preferred by enterprises already running Microsoft workloads and Active Directory. Google Cloud stands out for ML integration, with BigQuery ML and Vertex AI tightly coupled. TheCoderBox therefore conducts unbiased platform assessments aligned to your specific workload profile.

Hybrid Cloud Data Architecture for Enterprise Compliance Requirements

Regulated industries often require hybrid architectures that span on-premise and cloud zones. For example, financial services firms may need to keep PII in sovereign regions while running analytics in the cloud. Our architects design hybrid patterns that address GDPR, HIPAA, and SOC 2 requirements together. As a result, you gain cloud agility without compromising your compliance posture.
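One common building block for this kind of hybrid pattern is keyed pseudonymization. Identifiers are replaced with HMAC tokens inside the sovereign zone, and only the tokens travel to cloud analytics. The Python sketch below makes two assumptions: the key never leaves the on-premise side, and `pii_fields` is supplied by your data classification. Because the same input always yields the same token, joins and counts still work in the cloud. Under GDPR, pseudonymized data still counts as personal data, so this is one control within a wider design.

```python
import hashlib
import hmac
from typing import Any, Dict, Set


def pseudonymize(record: Dict[str, Any], pii_fields: Set[str], key: bytes) -> Dict[str, Any]:
    """Return a copy of record with PII fields replaced by keyed SHA-256 tokens."""
    out: Dict[str, Any] = {}
    for name, value in record.items():
        if name in pii_fields and value is not None:
            # Deterministic: equal inputs give equal tokens, so joins still work.
            out[name] = hmac.new(key, str(value).encode("utf-8"), hashlib.sha256).hexdigest()
        else:
            out[name] = value
    return out


# Hypothetical key; in practice it would stay in the sovereign zone's key store.
KEY = b"example-key-kept-on-premise"
row = {"customer_email": "ana@example.com", "country": "DE", "balance": 1200}
cloud_row = pseudonymize(row, {"customer_email"}, KEY)
```

Using a keyed HMAC rather than a plain hash means someone without the key cannot rebuild the token table by hashing a list of known email addresses.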

📋 Data Architecture Assessment: Free Workshop. Book a complimentary 90-minute architecture workshop with TheCoderBox engineers. We review your current stack and deliver a prioritized modernization roadmap. Schedule Your Free Workshop → https://thecoderbox.com/contact-us/

Big Data Engineering Services We Offer

Our big data consulting services span the full data engineering lifecycle, from raw ingestion to governed analytics. We deliver architecture design, pipeline engineering, and ongoing optimization, and every engagement includes documentation, testing, and knowledge transfer to your team.

Cloud Data Warehouse Implementation: Snowflake, BigQuery & Redshift

Cloud data warehouses are the analytical core of every modern data platform, so selecting and configuring the right warehouse has a major impact on query performance and cost. Our Snowflake implementation services cover multi-cluster architecture, data sharing, and query optimization strategies.

Snowflake Architecture Design, Optimization & Cost Management

Snowflake deployments require careful virtual warehouse sizing to avoid runaway compute costs. Our Snowflake specialists implement auto-suspend policies, clustering keys, and materialized views, and as a result most clients reduce Snowflake spend by 30–60 percent within the first 90 days. We also configure Snowflake Resource Monitors and query tagging for full cost transparency.
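Auto-suspend matters because a running warehouse bills for idle time. The sketch below is a simplified Python cost model, not a Snowflake API. It assumes per-second billing with a 60-second minimum each time a warehouse resumes, and the standard credits-per-hour ladder for warehouse sizes. Check current Snowflake pricing for your edition and region. The query bursts are hypothetical.

```python
from typing import List, Tuple

# Credits per hour for standard virtual warehouses (doubles with each size step).
CREDITS_PER_HOUR = {"XSMALL": 1, "SMALL": 2, "MEDIUM": 4, "LARGE": 8, "XLARGE": 16}


def billed_seconds(activity: List[Tuple[int, int]], auto_suspend_s: int) -> int:
    """Billed runtime for sorted (start_s, end_s) busy intervals.

    The warehouse resumes when a query arrives while suspended and suspends
    after `auto_suspend_s` seconds with no activity. Each running session is
    billed per second with a 60-second minimum (simplified model).
    """
    total = 0
    session_start = session_end = None
    for start, end in activity:
        if session_end is not None and start <= session_end:
            # Still running: this query extends the current session.
            session_end = max(session_end, end + auto_suspend_s)
        else:
            if session_start is not None:
                total += max(session_end - session_start, 60)
            session_start, session_end = start, end + auto_suspend_s
    if session_start is not None:
        total += max(session_end - session_start, 60)
    return total


def credits_used(activity: List[Tuple[int, int]], auto_suspend_s: int, size: str = "SMALL") -> float:
    return billed_seconds(activity, auto_suspend_s) / 3600 * CREDITS_PER_HOUR[size]


# Hypothetical workload: three short bursts spread over roughly 1.5 hours.
bursts = [(0, 30), (1000, 1030), (5000, 5060)]
comparison = {setting: billed_seconds(bursts, setting) for setting in (60, 600, 3600)}
```

For this workload, a 60-second auto-suspend bills 300 seconds. A one-hour setting bills 8,290 seconds for the same 120 seconds of actual query time. In Snowflake, the setting itself is applied with `ALTER WAREHOUSE <name> SET AUTO_SUSPEND = <seconds>`.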

Google BigQuery for Real-Time Analytics and ML Integration

Google BigQuery delivers serverless analytics with BigQuery ML built in. Our engineers configure partitioned and clustered tables so that queries scan less data and return faster. BigQuery also integrates directly with Vertex AI, enabling in-warehouse ML model scoring at petabyte scale, so your data scientists and engineers work from a single unified platform without moving data.
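Partitioning helps because BigQuery prunes partitions that a filter on the partitioning column rules out. Fewer bytes are read and, under on-demand pricing, billed. The Python sketch below models that effect on a hypothetical date-partitioned table with equal daily partition sizes. It does not call BigQuery. It also ignores clustering, which can prune further within each partition.

```python
from datetime import date, timedelta
from typing import Dict, Optional

GB = 10**9


def bytes_scanned(partitions: Dict[date, int],
                  date_from: Optional[date] = None,
                  date_to: Optional[date] = None) -> int:
    """Bytes read when a query filters on the partition column.

    Partitions outside [date_from, date_to] are pruned; with no filter,
    every partition is read (a full table scan).
    """
    return sum(
        size
        for day, size in partitions.items()
        if (date_from is None or day >= date_from) and (date_to is None or day <= date_to)
    )


# Hypothetical table: 30 daily partitions of 10 GB each.
events = {date(2024, 1, 1) + timedelta(days=i): 10 * GB for i in range(30)}
full_scan = bytes_scanned(events)                                      # 300 GB
last_week = bytes_scanned(events, date(2024, 1, 24), date(2024, 1, 30))  # 70 GB
```

Pruning only works when the filter is on the partitioning column itself. A filter on some other column still reads every partition.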

Data Pipeline & ETL/ELT Engineering

Apache Spark & Databricks Pipeline Architecture

Apache Spark remains the industry standard for large-scale distributed data processing. Our Databricks consulting practice builds production Spark pipelines on Databricks with Delta Lake as the storage layer. We configure auto-scaling clusters, job orchestration, and Delta table compaction, so your pipelines handle petabyte-scale workloads reliably with minimal manual intervention.
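Compaction addresses the small-files problem. Streaming and frequent incremental writes leave many small files that slow down reads. Delta Lake's `OPTIMIZE` command rewrites small files into larger ones. The Python sketch below illustrates only the planning step, grouping small files into bins with a first-fit-decreasing heuristic. The 1 GiB target and the half-target "small file" threshold are assumptions for illustration, not Delta Lake's internal algorithm.

```python
from typing import List, Optional

MiB = 1024**2


def plan_compaction(file_sizes: List[int], target_bytes: int = 1024 * MiB,
                    small_threshold: Optional[int] = None) -> List[List[int]]:
    """Group small files into bins of at most target_bytes (first-fit decreasing).

    Each returned bin lists the sizes of files to rewrite as one output file.
    """
    if small_threshold is None:
        small_threshold = target_bytes // 2  # assumption: below half the target counts as small
    small = sorted((s for s in file_sizes if s < small_threshold), reverse=True)
    bins: List[List[int]] = []
    totals: List[int] = []
    for size in small:
        for i, total in enumerate(totals):
            if total + size <= target_bytes:
                bins[i].append(size)
                totals[i] += size
                break
        else:  # no existing bin has room: open a new one
            bins.append([size])
            totals.append(size)
    # A bin holding a single file would just rewrite it unchanged, so skip it.
    return [b for b in bins if len(b) > 1]


# Hypothetical file sizes in a Delta table partition.
files = [s * MiB for s in (600, 300, 300, 300, 200, 100, 50)]
plan = plan_compaction(files)
```

Here the 600 MiB file is left alone. The six smaller files become two rewrites of 1,000 MiB and 250 MiB.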

dbt (Data Build Tool) for Transformation Layer Modernization

dbt brings software engineering discipline to your SQL transformation layer. Every transformation becomes version-controlled, tested, and self-documenting, and dbt's modular, reusable SQL models replace fragile stored procedures. As a result, your analytics engineering team ships changes confidently and can roll back quickly when needed.
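In dbt, checks like these are declared in YAML as generic tests (`unique`, `not_null`, `accepted_values`) and compiled to SQL that runs in the warehouse. The Python sketch below only mirrors what those three tests assert on a set of rows, so the logic is easy to see. It is not dbt's implementation, and the `orders` rows are hypothetical. As in dbt, a test passes when it returns no failing records.

```python
from collections import Counter
from typing import Any, Dict, Iterable, List

Row = Dict[str, Any]


def check_not_null(rows: Iterable[Row], column: str) -> List[Row]:
    """Failing rows: the column is null."""
    return [r for r in rows if r.get(column) is None]


def check_unique(rows: Iterable[Row], column: str) -> List[Any]:
    """Failing values: non-null values that appear more than once."""
    counts = Counter(r.get(column) for r in rows if r.get(column) is not None)
    return [value for value, n in counts.items() if n > 1]


def check_accepted_values(rows: Iterable[Row], column: str, values: Iterable[Any]) -> List[Row]:
    """Failing rows: a non-null value outside the allowed set."""
    allowed = set(values)
    return [r for r in rows if r.get(column) is not None and r.get(column) not in allowed]


# Hypothetical model output.
orders = [
    {"order_id": 1, "status": "shipped"},
    {"order_id": 2, "status": "returned"},
    {"order_id": 2, "status": "lost"},
    {"order_id": None, "status": "placed"},
]
results = {
    "not_null_order_id": check_not_null(orders, "order_id"),
    "unique_order_id": check_unique(orders, "order_id"),
    "accepted_values_status": check_accepted_values(orders, "status", ["placed", "shipped", "returned"]),
}
failed = [name for name, failures in results.items() if failures]
```

Running checks like these on every change, for example in CI, is what lets a team ship transformations with confidence.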

ELT vs. ETL in Modern Data Stacks: Which Is Right for You?

The ELT versus ETL decision depends on your warehouse's compute capabilities and data volume, but modern cloud warehouses like Snowflake and BigQuery strongly favor ELT architectures. ELT loads raw data first, then transforms it inside the warehouse using its native compute. You therefore retain full historical raw data and avoid costly pre-load transformation bottlenecks.
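Here is a minimal ELT sketch in Python. It uses the standard library's in-memory SQLite as a stand-in for a cloud warehouse, which is an assumption for illustration. In Snowflake or BigQuery, the same SQL pattern would run on warehouse compute. Raw records land untouched first, and cleaning and aggregation happen afterward in SQL, so the raw table stays available for reprocessing. Table and column names are hypothetical.

```python
import sqlite3
from typing import Iterable, Tuple


def run_elt(raw_rows: Iterable[Tuple[str, str, str]]) -> sqlite3.Connection:
    conn = sqlite3.connect(":memory:")  # stand-in for the warehouse (assumption)

    # Extract + Load: land source records as-is, every column as text.
    conn.execute("CREATE TABLE raw_orders (order_id TEXT, amount TEXT, country TEXT)")
    conn.executemany("INSERT INTO raw_orders VALUES (?, ?, ?)", raw_rows)

    # Transform: clean and aggregate inside the warehouse with its own SQL engine.
    conn.execute(
        """
        CREATE TABLE orders_by_country AS
        SELECT UPPER(TRIM(country)) AS country,
               COUNT(*) AS orders,
               SUM(CAST(amount AS REAL)) AS revenue
        FROM raw_orders
        WHERE amount IS NOT NULL AND amount <> ''
        GROUP BY UPPER(TRIM(country))
        """
    )
    conn.commit()
    return conn


# Hypothetical messy source rows: inconsistent country codes, one missing amount.
conn = run_elt([("1", "10.50", " us"), ("2", "4.50", "US"), ("3", "", "de"), ("4", "20", "de")])
summary = conn.execute("SELECT country, orders, revenue FROM orders_by_country ORDER BY country").fetchall()
```

If the cleaning rules change later, the transform can simply be rerun against `raw_orders`. In an ETL flow, the discarded raw values would be gone.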

Data Lake & Lakehouse Architecture

Delta Lake and Apache Iceberg: Open Table Format Comparison

Open table formats like Delta Lake and Apache Iceberg bring warehouse features to data lakes. Both support ACID transactions, schema evolution, and time-travel queries on object storage. Delta Lake integrates most deeply with Databricks, while Iceberg is more engine-agnostic, so our architects evaluate your existing tooling before recommending a table format strategy.
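To show what atomic commits and time travel mean mechanically, here is a toy Python model of an open table format. In the spirit of Delta Lake's transaction log and Iceberg's snapshots, each commit produces a new immutable version listing the data files that make up the table, and older versions stay readable. The class, its methods, and the file names are hypothetical. Real formats also track schemas, statistics, and finer-grained conflict rules, whereas this sketch rejects any commit based on a stale version.

```python
from typing import FrozenSet, Iterable, List, Optional


class VersionedTable:
    """Toy model (hypothetical API): every commit appends an immutable snapshot of file names."""

    def __init__(self) -> None:
        self._versions: List[FrozenSet[str]] = [frozenset()]  # version 0: empty table

    @property
    def latest_version(self) -> int:
        return len(self._versions) - 1

    def commit(self, add: Iterable[str] = (), remove: Iterable[str] = (),
               read_version: Optional[int] = None) -> int:
        """Atomically create a new version, or raise and leave the table unchanged."""
        if read_version is not None and read_version != self.latest_version:
            # Optimistic concurrency: someone committed since this writer read the table.
            raise RuntimeError("conflict: table changed since read_version")
        current = self._versions[-1]
        removed = set(remove)
        missing = removed - current
        if missing:
            raise ValueError(f"cannot remove files not in table: {sorted(missing)}")
        self._versions.append((current - removed) | set(add))
        return self.latest_version

    def files(self, version: Optional[int] = None) -> FrozenSet[str]:
        """Time travel: the file set as of `version` (default: latest)."""
        return self._versions[self.latest_version if version is None else version]


table = VersionedTable()
v1 = table.commit(add={"part-0.parquet", "part-1.parquet"})
v2 = table.commit(add={"part-2.parquet"}, remove={"part-0.parquet"})  # e.g. an UPDATE rewrite
```

Because each version is a complete, immutable file list, readers of version 1 are unaffected while version 2 is written. A failed commit never leaves a half-applied change behind.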

Data Lakehouse vs. Data Warehouse: When to Choose Each

Data lakehouses combine the flexibility of lakes with the governance of warehouses on one platform, supporting structured SQL analytics, unstructured ML workloads, and streaming simultaneously. For pure SQL analytics with strict SLA requirements, however, a dedicated warehouse can still deliver superior performance. TheCoderBox therefore architects the right pattern based on your query types, data variety, and team capabilities.

Real-Time & Streaming Data Engineering

Apache Kafka, AWS Kinesis & Google Dataflow Implementations

Real-time data processing is now critical for fraud detection, live pricing, and personalization. Our engineers implement streaming pipelines on Apache Kafka, AWS Kinesis, and Google Dataflow. Where the platform supports it, we configure exactly-once processing semantics so that no event is lost or counted twice; with Kafka, for example, this relies on idempotent producers and transactions. Your downstream systems then receive clean event streams, ordered within each partition or shard, at millisecond-level latency.
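One common way to get effectively-once results from an at-least-once stream has two parts. First, store each partition's consumer offset atomically with the results it produced. Then, on redelivery, skip anything below the stored position. The Python sketch below models that idea with in-memory state. The record layout `(offset, account_id, amount)` and the `ConsumerState` class are hypothetical. In a real system the two updates would share one database transaction (or a Kafka transaction).

```python
from dataclasses import dataclass, field
from typing import Dict, List, Tuple

Record = Tuple[int, str, int]  # (offset, account_id, amount): hypothetical layout


@dataclass
class ConsumerState:
    totals: Dict[str, int] = field(default_factory=dict)        # running aggregate
    next_offsets: Dict[int, int] = field(default_factory=dict)  # partition -> next unread offset


def process_batch(state: ConsumerState, partition: int, records: List[Record]) -> None:
    """Apply each offset's record only once, even when batches overlap on redelivery."""
    next_offset = state.next_offsets.get(partition, 0)
    new_totals = dict(state.totals)
    for offset, account, amount in records:
        if offset < next_offset:
            continue  # already applied before a retry or rebalance: skip it
        new_totals[account] = new_totals.get(account, 0) + amount
        next_offset = offset + 1
    # Commit results and position together; in production this is one transaction.
    state.totals = new_totals
    state.next_offsets[partition] = next_offset


state = ConsumerState()
process_batch(state, 0, [(0, "acct-a", 10), (1, "acct-b", 5)])
# Offset 1 is redelivered after a consumer restart, followed by new data.
process_batch(state, 0, [(1, "acct-b", 5), (2, "acct-a", 1)])
```

Offsets only order records within one partition, which is why the stored position is tracked per partition.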

Event-Driven Architectures for Fraud Detection and Live Personalization

Event-driven architectures let your systems respond to business events in real time. Financial institutions, for example, use Kafka-powered pipelines to flag suspicious transactions within 200 milliseconds, while e-commerce platforms personalize product recommendations dynamically based on live browsing behavior. These architectures scale horizontally to handle millions of events per second.
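A typical real-time fraud signal is a velocity rule: too many transactions on one card within a short window. The Python sketch below keeps a sliding window of timestamps per card and flags the transaction that pushes the count over the limit. The thresholds are hypothetical, and events are assumed to arrive in timestamp order for each card. A production system would keep this state in the stream processor rather than in a Python dict.

```python
from collections import defaultdict, deque
from typing import Deque, Dict


class VelocityRule:
    """Flag a card that makes more than max_txns transactions within window_s seconds."""

    def __init__(self, max_txns: int = 3, window_s: float = 60.0) -> None:
        self.max_txns = max_txns
        self.window_s = window_s
        self._recent: Dict[str, Deque[float]] = defaultdict(deque)

    def observe(self, card_id: str, ts: float) -> bool:
        """Record a transaction at time ts (seconds) and return True if it should be flagged."""
        window = self._recent[card_id]
        window.append(ts)
        # Evict timestamps that have slid out of the window.
        while window and window[0] <= ts - self.window_s:
            window.popleft()
        return len(window) > self.max_txns


# Hypothetical thresholds: more than 3 transactions per 60 seconds.
rule = VelocityRule(max_txns=3, window_s=60)
flags = [rule.observe("card-1", t) for t in (0, 10, 20, 30, 200)]
```

Each event touches only its own card's window, so the work per event stays small. That is what makes this kind of check feasible within a tight latency budget.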

🔍 Request a Big Data Architecture Review for Your Organization. Our senior engineers analyze your current data stack and identify the highest-impact modernization opportunities. No commitment required. Book Your Free Architecture Review → https://thecoderbox.com/contact-us/

Why TheCoderBox for Big Data Consulting Services?

TheCoderBox is a specialist big data consulting firm with certified engineers across all major cloud platforms. We combine deep technical expertise with commercial alignment to deliver measurable ROI, so every engagement focuses on outcomes, not just technology delivery.

- Multi-Cloud Certified: AWS, GCP, Azure, Snowflake SnowPro, and Databricks Partner Network accredited.

- Proven Cost Savings: Average 35% infrastructure cost reduction for clients migrating to cloud warehouses.

- Compliance-Ready: Purpose-built hybrid architectures for GDPR, HIPAA, and SOC 2 environments.

- Flexible Engagement: Project-based assessments, fractional CDO advisory, or dedicated team augmentation.

- India-Based Talent Pool: Hire senior data engineers in India through our vetted, client-embedded talent network.

Start Your Data Modernization Journey Today

Ultimately, your data is your most valuable competitive asset, but it only creates value when it flows reliably, scales effortlessly, and remains fully governed. Investing in professional big data consulting services therefore delivers compounding returns across every business function. TheCoderBox brings certified expertise, proven delivery frameworks, and flexible engagement models, so you move from fragmented data debt to a governed, high-performance platform faster. Contact TheCoderBox today and take the first step toward modern data infrastructure.

📅 Book Your Free Data Architecture Workshop at TheCoderBox.com. Join 150+ enterprises that trust TheCoderBox for Spark, Snowflake, Databricks, and cloud data warehouse delivery. Get Started → https://thecoderbox.com/service/data-analytics/